Capcom Sets a Clear Policy on Generative AI in Shipped Games

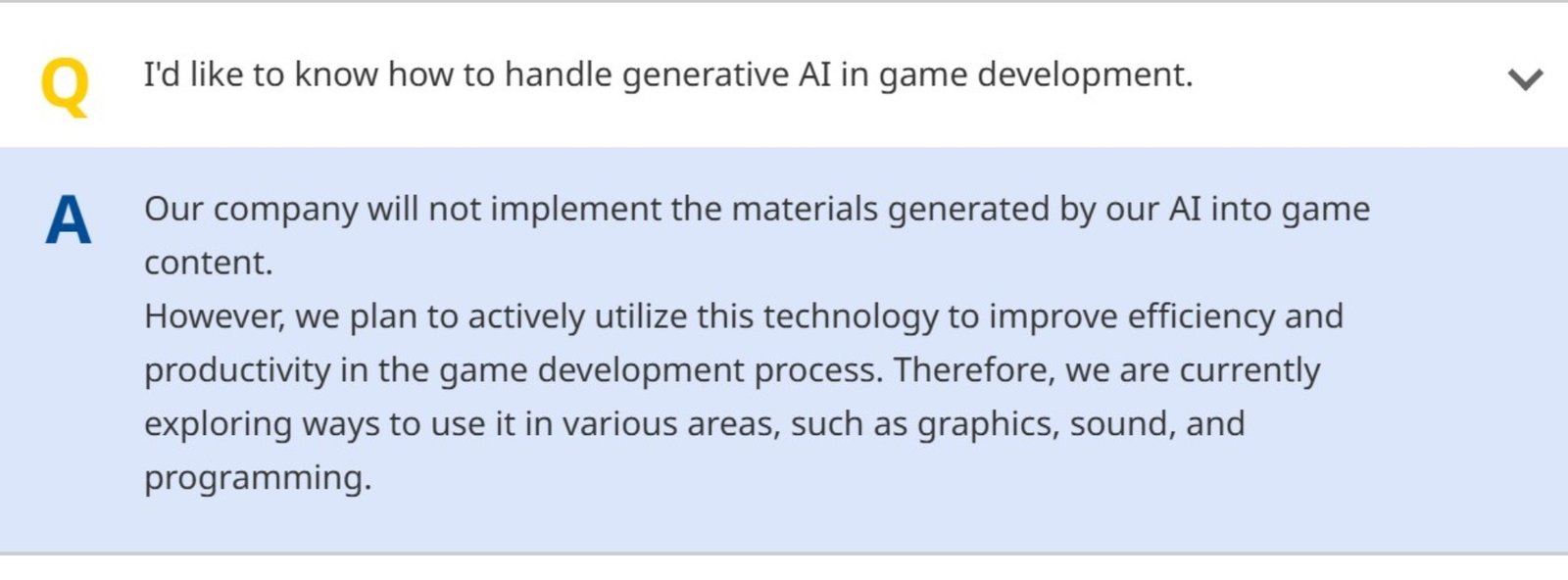

Capcom has drawn a firm line on generative AI in its released games, stating that it will not incorporate AI-generated assets into its game content. The clarification came during a recent investor Q&A, at a moment when publishers are being pushed to explain where they stand as AI tools become more common across development pipelines.

The statement matters because the industry conversation has shifted. The debate is no longer only about whether studios use AI during production, but also about whether AI-generated material ends up in the final shipped experience and whether audiences are told when it does.

In recent months, multiple games have faced scrutiny over suspected AI art or AI-touched assets, with developers either issuing denials or apologising and committing to replacements. Capcom is attempting to avoid that grey zone by publicly defining its boundary.

A Hard Split Between Production Tools and Final Content

Capcom’s position is not a blanket rejection of AI. The company says it will continue using AI technologies internally to improve efficiency and productivity. It has signalled exploration across departments, including graphics, sound, and programming, which aligns with how many studios are approaching AI today: as workflow support rather than a replacement for authored art and writing.

That split is easy to state but harder to operationalise. Once AI tools are used inside a pipeline, studios still have to enforce asset provenance, review processes, and sign-off standards to ensure nothing AI-generated slips into final builds by accident. Capcom's statement effectively raises the bar for its own internal governance, because the company has now set a public expectation that the community can test.

Why Capcom Is Taking This Stance Now

There is a practical reputational angle here. Capcom is one of the few large publishers to have maintained a steady rhythm of releases and updates without routinely walking into avoidable public controversies. Declaring that no generative-AI assets will appear in final content reduces one emerging risk area, especially as players and platform holders pay closer attention to what counts as authentic production.

It also positions Capcom against a broader backdrop where platform policies and store requirements are evolving. Disclosure standards remain inconsistent across the market, and that ambiguity has helped fuel suspicion whenever something looks off. Capcom’s approach attempts to replace ambiguity with a simple claim: AI may be used for efficiency, but the shipped content is not generated by it.

The policy will only be meaningful if it remains consistent across future releases and communications. The biggest open question is how Capcom defines “game content” at the edges, including marketing materials, localisation workflows, and production placeholders that might appear in public demos.